March

16


Mind the Gap: Spaced Learning and Dendritic Spines

A lifetime ago, in another country, I had a middle school English teacher nicknamed “Mrs. Again”. She was plump and wrinkled, with the kind of wide-cheeked, broad-nosed face one could find on folksy condiment bottle labels, but nobody ever made fun of her. She was terror incarnate, being the only teacher who gave daily dictation quizzes instead of weekend homework. If you failed, your paper would get a big red “Again!” marked on top; you would stay after school and do the dictation again, and again, and your hands would ache, and you would miss dinner, and your attention would waver just enough for you to undershoot the passing mark again, and your paper would be marked “Again!” again, and you’d just have to do it all over again!

Even then, my classmates and I knew that something was wrong. We learned several dozen new words for dictation in class the day before, and this 45-minute class, coupled with several minutes of review at night (a hardworking bunch we were not), was enough to give many of us perfect recall the next day. However, those of us who had to do dictation “Again!” made only modest improvements after a grueling hour, despite knowing the words we lost points on. In fact, we sometimes forgot words we thought we had down pat even as we corrected earlier mistakes. Assuming that this phenomenon could not be entirely explained by our daftness or anxiety, then, how should we make sense of this learning “blockage”? To know how learning can become more or less effective, we first need to know what “learning” is. Unfortunately, like so many other scientific questions, this one has only incomplete and in-progress answers. However, we do know that our brain is responsible (surprising, yes), and we have some good ideas as to why and how that is.

Changes in Dendritic Spines Reflect How We Learn

The brain is a simple organ. Well, not really, but what it does can be summarized (i.e. oversimplified) succinctly: it converts sensory inputs to motor outputs. We sense, and then we act. We can speculate and plan and make choices in our heads, consciously or unconsciously, but the choices will eventually manifest as either actions or the deliberate withholding of them. Dictation, for example, requires us to first hear a sound, and then write out the letter sequence corresponding to the sound. We might struggle with recalling specific letter choices (“Is it ‘defense’ or ‘defence’?”) before writing the word down, but the deliberation has to end in writing eventually as Mrs. Again drones implacably on. To “learn”, in this context, means to perform the sensory-to-motor conversion more efficiently, i.e., to reach the desired motor outputs faster and more often, given a specific range of sensory inputs.

And how does the brain increase its efficiency, exactly? Let me explain, then, with a few pictures.

Below is a plush doll representation of a neuron. It is cute and squishy. Our brain contains billions of them. Neurons, I mean, not dolls.

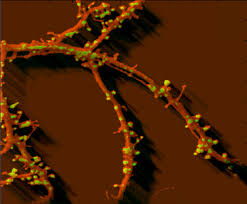

The protrusions on the doll’s “head” represent dendrites, the “inputs” of a neuron. If you zoomed in on a real dendrite, however, you would not see the smooth fabric of a doll, but a knotty surface with many small protrusions, like so:

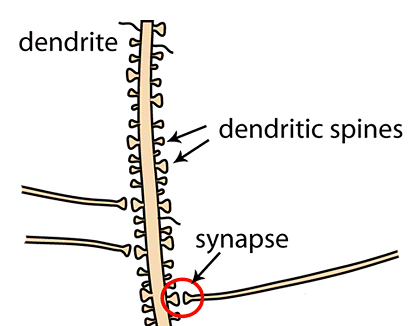

Unlike the plush doll, neurons are always in close contact with their own kind. The small protrusions above are called dendritic spines [1], presumably because they look spiny. These spines are the stubby neuronal fingers that grab onto other neurons’ axons, which are the “outputs” of a neuron represented above as the doll’s “foot”, to form synapses. Kind of like this:

Learning improvements at the cellular level can be implemented as changes in synaptic strength [2], or how reliably the information transfer between axon and dendrite happens at the synapse, where dendritic spines and axons are in very close proximity. This change in reliability correlates with, and crucially depends on, changes in the spines’ physical shapes and sizes, as well as the biochemical remodeling of the spines’ contents. When the connection between neurons strengthens, the dendritic spines tend to enlarge, and the postsynaptic density (a thick scaffold of proteins supporting the communication apparatus of the dendritic spines) expands, allowing more reliable and frequent transmissions [3]. To understand why learning sessions far apart in time (in Academese: “spaced training”) seem to produce better results than cramming (in Academese: “massed training”) [4], then, we need to know how the timing of these changes in dendritic spines might interact with the timing of repeated training.

Accommodating Dendritic Spine Growth with Gaps in Learning

Although many competing theories for “spaced training advantage” exist, they can mostly be summarized in two hypotheses: for memory formation and retention, adequately distributed spacing between learning sessions is beneficial; alternatively (or rather, at the same time), overly brief spacing is detrimental. “Study-phase retrieval theory” [5], for instance, argues for the benefit of spacing: spaced training is more effective than massed training because each spaced trial elicits recall of a memory formed by the trial before it. (The word “memory” here may look deceptively familiar, but should really be read as “changes in synaptic strength across neurons involved in training”.) In contrast, “deficient-processing theory” [6] suggests that massed training is less effective because some time-consuming processes that are necessary to form memories cannot be fully executed. As is often the case with science, when we consider the role of dendritic spines, existing evidence can offer support for both theories.

The actual spacing between learning sessions that leads to performance improvement has Russian doll characteristics: there are multiple “optimal” durations nested within each other, some corresponding to the time scales over which synaptic changes occur. A broad range of spacings, from seconds to days, has been used for spaced training in different animal models, from honeybees to mammals, and has been shown to be more effective than comparably timed massed training [4]. While brief intervals as short as a minute may depend on molecules that already exist within the dendritic spines at the time of learning [7], the most commonly reported intervals range from ~5 minutes to 1 hour, a span that likely suffices to initiate changes in dendritic spines’ molecular composition [4]. Those initiated changes will eventually lead to bigger and better spines over days, in line with observed performance improvements via spaced learning with days-long intervals. In fact, a training regimen incorporating both ~1 hour spacings and day-long spacings was shown to be more effective than using a single set duration [8], suggesting that there are at least two windows of opportunity for learning improvement: one can either step on the accelerator of dendritic spine growth with ~1 hour intervals, or build on a solid foundation with days-long intervals once the spines have fully grown from the previous bout of learning.

While the previous paragraph explains how adequate spacing might be helpful, the evidence in it cannot be used to explain how my classmates seemingly forgot words they got right the first time during Mrs. Again’s dictation quiz retakes (I bet you almost forgot about her, no? So I inserted the reference here to help you practise spaced learning). What might have happened, I think, is this: we know that a memory can be made “transiently labile”, or temporarily open to change, if retrieved or reactivated [9]. Think about how, when using a USB drive or portable hard drive, the computer gives you the option to “Safely Remove Hardware”: if you unplug when files on the portable hardware are being accessed, then you are likely to damage the files. And in massed training that involves repeated recall of existing memory (e.g. words for dictation we learned on the previous day), we might as well be hot-swapping dozens of USB drives on the fly, without ever Safely Removing (i.e. reconsolidating) them, thus “corrupting” what we thought we knew. This would be much less of an issue if the recalled memories were themselves well-consolidated in the first place, but newly formed memories such as the dictation words from a day back seem particularly susceptible to change [10].

A Twist Ending that Nobody Asked For

Well, this is where I am supposed to tie everything together in a neat bow and show off my wit in one last clever turn of phrase. However, I have no idea how to do that, and I am not sure what all this verbiage might actually achieve. Knowing a snippet of how spaced learning might actually work in the brain is, let’s say, “neat”, and might encourage someone somewhere to try learning more efficiently and better his or her life accordingly. But more likely than not, these words will be promptly forgotten by even myself, like the dictation quiz keywords of old. After all, we don’t need better learning techniques to remember that xenophobia and greed are driving the world towards a future reserved for only a happy few, and whatever I write here is no more pertinent to the lives of the others, whose future remains less certain, than the folksy condiment bottle labels I linked to several paragraphs ago. So why am I still here, then? It is far past the time to stop this piece. Nevertheless, I cannot help but keep writing; even if it currently amounts to no more than self-satisfaction, the pen I have been polishing just might prove mightier than the proverbial sword one day. Before the day comes, and so that the day does eventually come, I simply have to write again, and again, and again.

References:

[1] Bosch M, Castro J, Saneyoshi T, Matsuno H, Sur M, Hayashi Y. Structural and molecular remodeling of dendritic spine substructures during long-term potentiation. Neuron. 2014; 82(2):444–59.

[2] Nabavi S, Fox R, Proulx CD, Lin JY, Tsien RY, Malinow R. Engineering a memory with LTD and LTP. Nature. 2014; 511(7509):348–52.

[3] Sheng M, Hoogenraad CC. The postsynaptic architecture of excitatory synapses: a more quantitative view. Annu. Rev. Biochem. 2007; 76:823–47.

[4] Smolen P, Zhang Y, Byrne JH. The right time to learn: mechanisms and optimization of spaced learning. Nat. Rev. Neurosci. 2016; 17(2):77–88.

[5] Braun K, Rubin DC. The spacing effect depends on an encoding deficit, retrieval, and time in working memory: evidence from once-presented words. Memory. 1998; 6:37–65.

[6] Toppino TC, Bloom LC. The spacing effect, free recall, and two-process theory: a closer look. J. Exp. Psychol. Learn. Mem. Cogn. 2002; 28(3):437–44.

[7] Menzel R, Manz G, Menzel R, Greggers U. Massed and spaced learning in honeybees: the role of CS, US, the intertrial interval, and the test interval. Learn. Mem. 2001; 8(4):198–208.

[8] Wainwright ML, Zhang H, Byrne JH, Cleary LJ. Localized neuronal outgrowth induced by long-term sensitization training in Aplysia. J. Neurosci. 2002; 22(10):4132–41.

[9] Dudai Y, Eisenberg M. Rites of passage of the engram: reconsolidation and the lingering consolidation hypothesis. Neuron. 2004; 44(1):93–100.

[10] Díaz-Mataix L, Martinez RCR, Schafe GE, LeDoux JE, Doyère V. Detection of a temporal error triggers reconsolidation of amygdala-dependent memories. Curr. Biol. 2013; 23(6):467–72.
