March

31

Golden Retrievers, Terriers, and Artificial Neural Networks

Usually when someone tells you that they are studying something, it is safe to assume that they interact directly with whatever it is they study. So you might be surprised to hear that there are neuroscientists who spend little time manipulating and observing the dynamics within an organism's physical brain or collecting experimental data. Instead, they manipulate and observe the dynamics of simulated neurons (brain cells). They are known as computational neuroscientists, though to be clear, being an experimental neuroscientist and being a computational neuroscientist are not mutually exclusive. In fact, drawing on both approaches will likely propel the field of neuroscience forward.

Computational neuroscientists can be thought of as neuroscientists who run simulations of numerical models on computers to explore how actual brains might process information and perform different functions. Models are typically based on what we already know about brain structure (or ‘architecture’ for the computer science fans out there) and function. Models that are validated by experimental data recorded from actual neurons gain recognition and relevance in the field, and they can inform future neuroscience experiments, for example by generating predictions for new experiments or for how neural circuits may behave in particular disease states.

[Aside: Computational neuroscientists can also be interested in making sense of existing experimental data that they didn’t necessarily collect themselves. They can employ different methods of quantitative data analysis and try to figure out whether the experimental data aligns with theoretical models of how the brain works.]

Why do computational neuroscientists use numbers to represent neurons? Conceptualizing how the brain performs its many functions is a tall order, because one must reconcile a high-level computational system with emergent properties (i.e., the brain) with its billions of individual yet interconnected computational components (i.e., neurons). Even a single neuron is complicated to think about, let alone a whole network of interconnected neurons.

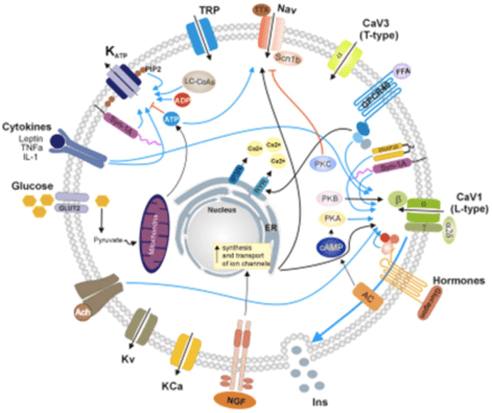

Fig. 1: Schematic of a Neuron

Take a glance at the circular schematic of a neuron in Fig. 1. Even though it almost certainly isn’t the full story, it’s overwhelming, isn’t it? There is a two-layered membrane represented as a string of gray circles; there are different types of channels lodged in the membrane that govern the movement of potassium (K+), calcium (Ca2+), and sodium (Na+) ions into and out of the neuron; there are hormones interacting with membrane proteins, and much more. Everything depicted in the schematic, and more, influences the activity of a neuron: not only whether it is active, but how active it is. Just as the cadence of your voice changes depending on what you are trying to communicate (anger, suspicion, gratitude…), neurons communicate by propagating electrical signals with different activity patterns: high-frequency spiking, low-frequency spiking, spikes that come in bursts, signals dominated by quiescent periods, etc. (Fig. 2).

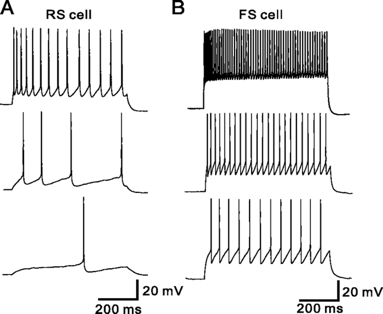

Fig. 2: Different Neural Activity Plots. Upward deflections represent spikes, the rapid voltage changes a neuron uses to propagate electrical signals.

Now, ignore all the details in the original schematic. Instead of thinking about neurons as being active in a particular way, think of them as being either active or inactive at any given time point, namely ON (+1) or OFF (0) (Fig. 3). This oversimplified framework makes it much more manageable to study the dynamics of a group of interconnected neurons in an artificial setup. As far back as 1943, Warren McCulloch, a neuroscientist, and Walter Pitts, a logician, published a paper that included this simplified model of a neuron [3]. An artificial neural network (ANN) is essentially a collection of connected McCulloch-Pitts (MCP) neurons. We can think of MCP neurons as information-processing nodes that communicate with each other when ON, and we can quantify the strength of their connections by assigning a numerical weight to each connection. A node turns ON when the weighted sum of the signals it receives from other ON nodes reaches a threshold, so adjusting the values of these weights affects the output of the ANN, specifically whether the output node turns ON (Fig. 4).

Fig. 3: Graphical Representation of the Activity of an MCP Neuron

Fig. 4: Schematic of an Artificial Neural Network
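The threshold rule behind an MCP neuron can be sketched in a few lines of Python. This is a minimal sketch, assuming binary inputs, numerical weights, and a fixed threshold; it ignores the absolute inhibitory inputs of the original 1943 formulation. The function name `mcp_neuron` and the example weights and thresholds are hypothetical, chosen only for illustration.

```python
def mcp_neuron(inputs, weights, threshold):
    """Return 1 (ON) if the weighted sum of binary inputs reaches the threshold, else 0 (OFF)."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


# With equal weights, the threshold alone decides which logic function the neuron computes.
# Threshold 2 with two inputs: ON only when both inputs are ON (logical AND).
# Threshold 1 with two inputs: ON when at least one input is ON (logical OR).
for a in (0, 1):
    for b in (0, 1):
        and_out = mcp_neuron([a, b], [1, 1], threshold=2)
        or_out = mcp_neuron([a, b], [1, 1], threshold=1)
        print(a, b, and_out, or_out)
```

With the same weights, lowering the threshold from 2 to 1 turns the neuron from an AND gate into an OR gate, which is one way to see how the numbers attached to a node (its weights and threshold) shape what it computes.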

Let’s find an example to illustrate what each layer of nodes could be accomplishing within this framework, and what constructing this sort of ANN can simulate. One thing that we as humans need to do on a day-to-day basis is learn to recognize and categorize what we encounter in our environments. For instance, we recognize dogs as dogs regardless of whether they are big or small, golden retrievers or terriers, etc.

How can a neural network achieve this capability?

Let’s think of the input layer as nodes that receive external sensory information (the texture of the dog’s fur, the sound of it barking, etc.), and of the hidden layers as processing this information so that some hidden nodes end up ON and others OFF. Finally, let’s think of the ON state of the output node as your recognition of ‘dog.’
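The input, hidden, and output layers described above can be sketched as a tiny network of MCP neurons. This is a minimal sketch, assuming hand-picked weights rather than learned ones, and four hypothetical yes/no features standing in for sensory input; real image-recognizing networks work on pixel values and contain vastly more nodes. The names `layer`, `is_dog`, and the weight tables are illustrative only.

```python
def mcp_neuron(inputs, weights, threshold):
    """Return 1 (ON) if the weighted input sum reaches the threshold, else 0 (OFF)."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def layer(inputs, weight_rows, thresholds):
    """Compute the ON/OFF state of every node in one layer."""
    return [mcp_neuron(inputs, w, t) for w, t in zip(weight_rows, thresholds)]


# Hypothetical input features: [has_fur, barks, wags_tail, meows]
HIDDEN_WEIGHTS = [
    [0, 1, 1, 0],  # hidden node 1: "dog-like behavior" (barks OR wags tail)
    [0, 0, 0, 1],  # hidden node 2: "cat cue" (meows)
    [1, 0, 0, 0],  # hidden node 3: "furry"
]
HIDDEN_THRESHOLDS = [1, 1, 1]

# Output node "dog": excited by hidden nodes 1 and 3, inhibited (negative weight) by node 2.
OUTPUT_WEIGHTS = [1, -2, 1]
OUTPUT_THRESHOLD = 2


def is_dog(features):
    """Run the input through the hidden layer, then the single output node."""
    hidden = layer(features, HIDDEN_WEIGHTS, HIDDEN_THRESHOLDS)
    return mcp_neuron(hidden, OUTPUT_WEIGHTS, OUTPUT_THRESHOLD)


print(is_dog([1, 1, 1, 0]))  # golden retriever that barks and wags: 1 (ON)
print(is_dog([1, 0, 1, 0]))  # quiet terrier wagging its tail: 1 (ON)
print(is_dog([1, 0, 1, 1]))  # meowing cat swishing its tail: 0 (OFF)
```

The negative weight from the "cat cue" node plays the role of an inhibitory connection: for the furry, tail-swishing cat, hidden nodes 1 and 3 alone would push the output node to threshold, but the active cat-cue node pulls the weighted sum back down so the output stays OFF.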

What is fascinating about an ANN is that you can feed it different inputs (e.g., pictures of dogs and other animals) and train it until it learns to recognize dogs, as evidenced by the output node turning ON whenever a new, previously unseen dog picture is fed into the network. The basic idea is that you keep presenting the ANN with pictures so that it extracts certain features and picks out common patterns; in this sense, the ANN learns by example. At first, the network’s output is inaccurate, because the ANN has not yet learned to recognize dog pictures. However, because we can also provide the ANN with the ‘correct’ output (output node ON if the picture is indeed of a dog, OFF if not), on every iteration the ANN can compare its output to what the output should be and calculate the difference between the two, that is, the error. This error is then propagated backward through the network, from the output layer toward the input layer, and used to adjust the weights of the connections between layers. As a result, some connections get strengthened (their weights increase) and others get weakened (their weights decrease) for the next iteration. This cycle repeats until the error is minimized and the ANN recognizes a novel dog picture as a ‘dog.’
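The compare-output, compute-error, adjust-weights loop described above can be illustrated with the perceptron learning rule. To be clear, this is a swapped-in, simpler technique: it trains a single threshold unit with no hidden layer, whereas modern multi-layer ANNs propagate the error backward through hidden layers (backpropagation) using smooth activation functions, which this sketch does not implement. The feature encoding, training examples, and function names below are hypothetical toy choices, not real pictures or any library's API.

```python
def predict(features, weights, bias):
    """Threshold unit: ON (1) if the weighted sum plus bias is positive, else OFF (0)."""
    total = bias + sum(x * w for x, w in zip(features, weights))
    return 1 if total > 0 else 0


def train_perceptron(examples, n_features, learning_rate=1.0, max_epochs=100):
    """Perceptron learning rule: nudge each weight in proportion to the error."""
    weights = [0.0] * n_features
    bias = 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for features, target in examples:
            # error is +1 (should have been ON), -1 (should have been OFF), or 0 (correct)
            error = target - predict(features, weights, bias)
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x for w, x in zip(weights, features)]
                bias += learning_rate * error
        if mistakes == 0:  # every training example is now classified correctly
            break
    return weights, bias


# Hypothetical features: [has_fur, barks, wags_tail, meows, has_feathers]
# Label 1 = "dog", 0 = "not a dog"
training_examples = [
    ([1, 1, 1, 0, 0], 1),  # golden retriever
    ([1, 1, 0, 0, 0], 1),  # barking terrier
    ([1, 0, 1, 0, 0], 1),  # quiet dog wagging its tail
    ([1, 0, 0, 1, 0], 0),  # cat
    ([0, 0, 0, 0, 1], 0),  # bird
]

weights, bias = train_perceptron(training_examples, n_features=5)
print(weights, bias)
print(predict([0, 1, 1, 0, 0], weights, bias))  # unseen: hairless dog that barks and wags
```

Weights change only when the unit gets an example wrong, which mirrors the idea of learning from the error. A single threshold unit like this can only learn categories that a straight line (or plane) can separate; that limitation is one reason practical networks add hidden layers and train them with backpropagation.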

An ANN can be thought of as a simulation of how pattern recognition and learning might be implemented. This is not necessarily how neural networks in biological systems learn or perform other high-level functions, but it is an example of computational modeling inspired by a reductionist approach to understanding the real-life dynamics between interconnected neurons. The brain is the ultimate ‘computer’ that we know of, and to replicate its computational prowess, we need not only to delineate the characteristics of all the different types of neurons and their connections and interactions, but also to build more sophisticated and comprehensive models that account for these characteristics.

Lastly, I would like to leave you with the realization that you were probably already familiar with neural networks before reading this blog post: Siri, the speech-recognition assistant residing in our iPhones, employs neural networks, as do machines built to identify fingerprints or detect cancerous cells. The common thread tying these examples together is feature extraction and pattern recognition. If nothing else, remember that artificial neural networks are very good at that!

Ege Yalcinbas is a second-year neurosciences graduate student at UCSD from Istanbul, Turkey. When not in lab, she can be found on stage in the guise of various characters, her most recent one being Ilse from the musical Spring Awakening.

References:

- Hopfield, J. J. (April 1982). Neural networks and physical systems with emergent collective computational abilities. Proc. Natl. Acad. Sci. USA, Vol. 79 (8), pp. 2554-2558.

- Ince, D. (2011). The Computer. Oxford University Press.

- Marsalli, M. (2006). McCulloch Pitts Neurons. The Mind Project, Consortium on Cognitive Science Instruction. http://www.mind.ilstu.edu/curriculum/mcp_neurons/mcp_neuron_1.php?modGUI=212&compGUI=1749&itemGUI=3018

- De Schutter, E. (May 2008). Why Are Computational Neuroscience and Systems Biology So Separate? PLOS Computational Biology, Vol. 4 (5).