April

22


Neuromorphic engineering: how electronics are learning from the brain

“As engineers, we would be foolish to ignore the lessons of a billion years of evolution” -Carver Mead

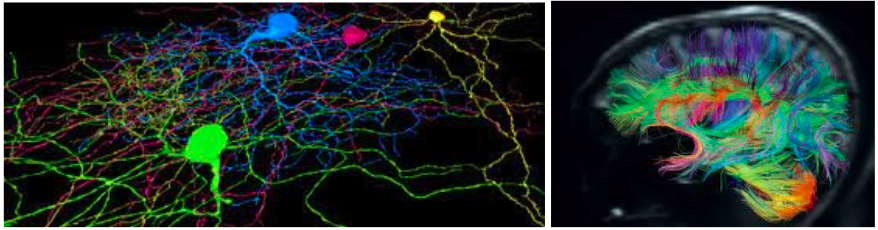

Scientists have been pursuing artificial intelligence that rivals the human brain for decades. The brain is remarkable in several computer-like respects: it processes multiple complex tasks in parallel with high efficiency, and it consumes exceptionally little power. For instance, the human brain runs on roughly 12 watts, while a lightbulb needs 60 watts and a standard desktop computer requires about 200 watts just to stay powered on. Brains also adapt and learn with an ease that computers cannot match. Neuromorphic engineering rests on the belief that we can reverse engineer the way the human brain efficiently represents information about the world and then exploit that efficiency in artificial systems. These electronic realizations of biological models of neural systems are built in silico, a reference to the silicon in computer chips. They are implemented on chips that integrate many thousands of transistors or more, an approach known as Very Large Scale Integration (VLSI).

Neuromorphic engineering is useful for performing human-like tasks. Many such devices already exist, including chemosensors (electronic noses) [1], dynamic visual trackers [2], and cochlear implants that restore hearing [3]. Beyond implementing neural processing systems in hardware for practical applications, neuromorphic chips can serve as a tool for basic neuroscience, allowing scientists to create and modify artificial neural systems such as the first biologically realistic silicon neuron [4] and realistic models of the mammalian retina [5]. Thus, neuromorphic engineering draws ideas and inspiration from neuroscience and can feed that knowledge back into neuroscience.

How the brain outperforms our best computers

Studying how the brain works is useful for engineers. Not only does the brain effortlessly solve problems that we want computers to solve, such as speech understanding and learning, but neurobiology also holds many engineering lessons. The brain is unlike any human-built machine: it is self-configuring, it is reliable despite being built from unreliable, noisy components (neurons and glia), and it solves complex problems simultaneously, which is known as parallel processing. As a computational device, the brain is quite remarkable. Even though its computing elements are slow and unreliable, the nervous system easily outperforms today’s most powerful computers in many computational tasks such as vision, audition, and motor control, despite the vast resources dedicated to research and development in information and communication technologies.

The reasons for this gap between the latest, fastest computers and the brain’s robust performance on real-world tasks are not fully understood. Many fields of neuroscience are still working to understand “the neural code”, meaning how the brain represents information from the external world. As neuroscientists get closer to understanding the algorithms the brain implements, engineers can work to implement those algorithms in models and on chips, in silico.

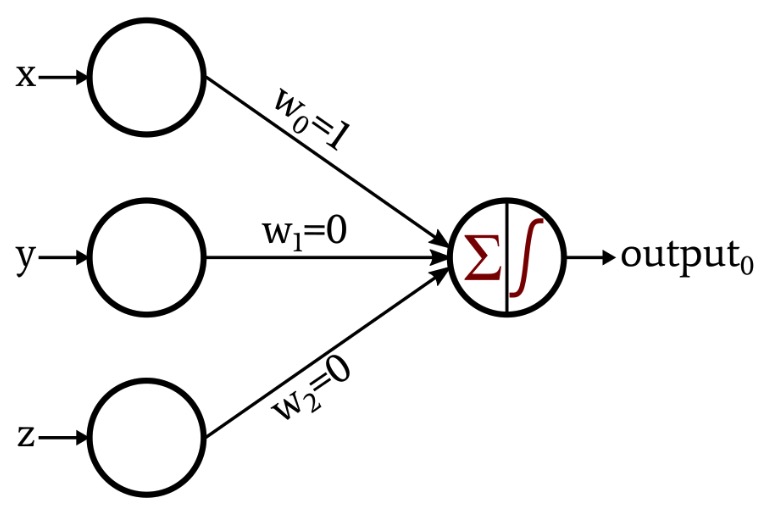

It is clear, however, that the style of computation carried out by a computer is fundamentally different from that of a brain. For instance, computers use precise digital operations, true/false logic (Boolean logic), and clocked operations, which are synchronized to a global clock signal [6]. The nervous system carries out robust and reliable computation using a hybrid of unreliable analog and digital components, and it operates on its own time scale, meaning it is unclocked. Neurons leverage distributed, event-driven, collective, and massively parallel mechanisms [6]. The brain adapts, self-organizes, and learns, something conventional computers struggle to do. Additionally, the neurons in the cortex are organized in a special way. It is suggested that populations of neurons perform collective computation in individual clusters, transmit the results of this computation to neighboring clusters, and exchange the relevant signals with other areas of the cortex through feedback connections [6]. The computation that neurons carry out looks more like statistical inference in a graphical model than like Boolean logic.
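To make the contrast between clocked and event-driven computation concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a model of any real chip or neuron: the function names, the `leak` decay factor, and the example spike times are all hypothetical. Both functions track the same leaky state; the clocked version does work on every tick, while the event-driven version does work only when a spike arrives.

```python
import math

def clocked(spike_times, num_ticks, leak=0.9):
    """Update the state on every clock tick, whether or not anything happened."""
    spikes = set(spike_times)
    state, updates = 0.0, 0
    for t in range(num_ticks):
        state = state * leak + (1.0 if t in spikes else 0.0)
        updates += 1
    return state, updates

def event_driven(spike_times, num_ticks, leak=0.9):
    """Update the state only when a spike arrives, applying the decay
    accumulated since the previous event in a single step."""
    state, updates, last_t = 0.0, 0, 0
    for t in sorted(spike_times):
        state = state * leak ** (t - last_t) + 1.0
        last_t = t
        updates += 1
    # Decay from the last event to the final tick so both versions
    # report the state at the same moment.
    state *= leak ** (num_ticks - 1 - last_t)
    return state, updates

# Hypothetical sparse input: 4 spikes in 1000 ticks.
spike_times = [3, 40, 41, 900]
c_state, c_updates = clocked(spike_times, 1000)
e_state, e_updates = event_driven(spike_times, 1000)
print(c_updates, e_updates)              # prints 1000 4
print(math.isclose(c_state, e_state, rel_tol=1e-9))
```

With sparse activity, the event-driven version reaches the same state with far fewer updates, which is one intuition for why event-driven designs can save energy.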

How can we build a brain with electronics?

Limits on semiconductor scaling will constrain our ability to build electronics long before we have robust brain-like computers [6]. How can biology show us how to build better and more scalable computers? While brains are built from hydrocarbons and aqueous solutions and digital computers are built from silicon and metal, both represent information using physical quantities. Digital computers transmit electrical signals just as brains do. Both systems need solutions to inherently noisy communication. Both brains and computers “compute” by selecting an output based on the current state and the applied input. Overcoming the fundamental differences between the two is not so simple, though. The fundamental building blocks of electronics are multipliers and adders that do the mathematics [6], whereas the brain works directly with physical phenomena, such as the light and sound waves it senses, and can adapt and learn. Neither is suited to the other's domain: neurons are bad at the kind of math computers do, and multipliers and adders are poor choices for adapting and learning. Because the two represent information in fundamentally different ways, each excels in its own domain. If we want to build more brain-like computers, we will have to learn the code neurons use and understand their structure. The structure of neurons allows parallel, asynchronous spike signaling across many brain regions. Neurons also allow for adaptation (e.g. a brain region can be co-opted for a new function if necessary).

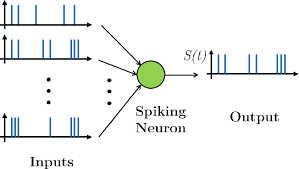

The inputs and outputs are both spike trains

To emulate a brain, neuromorphic computing aims to reproduce the principles of neural computation. Designers can borrow from brain architecture to use the transistors on a chip more efficiently, or to build more robust and flexible circuitry. Another approach is to represent and process signals with spikes, as a brain would. A spiking neural network resembles an artificial neural network in architecture, but instead of taking numerical values as input, it takes in a spike train: a series of action potentials, the brief electrical pulses neurons use to represent information. Spiking neural networks are a useful paradigm for solving complex pattern recognition and sensory processing tasks that are otherwise difficult to tackle with standard machine vision and machine learning techniques [7]. As neuroscientists gain a better understanding of the neural code of spiking, neuromorphic electronics will be able to progress as well. However, spiking neural networks are not an end in themselves; neuromorphic chips still use 100 to one million times as much power as a biological neuron [7]. To put this in context, current neuromorphic chips are reported to be as much as one billion times more efficient than standard microprocessors [8], so significant progress has been made in recent years.
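The idea of a unit whose input and output are both spike trains can be sketched with a leaky integrate-and-fire neuron, a common textbook simplification. The sketch below is only an illustration: the function name and the `weight`, `leak`, and `threshold` values are assumptions chosen for readability, not parameters from any of the chips or papers cited here.

```python
def lif_neuron(input_spikes, weight=0.6, leak=0.9, threshold=1.0):
    """Leaky integrate-and-fire neuron over a binary input spike train.

    At each time step the membrane potential decays by `leak`, rises by
    `weight` if an input spike arrives, and emits an output spike (then
    resets to zero) once it reaches `threshold`.
    """
    potential = 0.0
    output_spikes = []
    for spike in input_spikes:
        potential = leak * potential + weight * spike
        if potential >= threshold:
            output_spikes.append(1)
            potential = 0.0  # reset after firing
        else:
            output_spikes.append(0)
    return output_spikes

# Hypothetical input spike train (1 = spike, 0 = silence).
input_train = [1, 1, 0, 0, 1, 0, 1, 1, 1, 0]
print(lif_neuron(input_train))  # prints [0, 1, 0, 0, 0, 0, 1, 0, 1, 0]
```

In this toy model, a single input spike is not enough to make the neuron fire; it takes spikes arriving close together in time, so the output train carries information about the timing of the input.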

A brief history into neuromorphic engineering

Research has picked up quickly over the last few decades, with significant strides made each year. In 1991, the first biologically realistic silicon neuron was built [4]. In 2006, researchers at Georgia Tech created one of the first programmable silicon arrays of neurons [9]. In June 2012, researchers at Purdue University presented a paper on the design of a neuromorphic chip that they claimed worked similarly enough to a neuron that it could be used to test methods of reproducing the brain’s processing [10]. Neuromorphic engineering has also been attracting substantial research funding. The Human Brain Project, for example, has funded work with implications for neuromorphic engineering that attempts to simulate a complete human brain on a supercomputer using biological data. The three primary goals of the project are to better understand how the pieces of the brain fit and work together, to understand how to objectively diagnose and treat brain diseases, and to use that understanding of the human brain to develop neuromorphic computers.

Industry is also taking advantage of the technology. In 2014, IBM introduced a neuromorphic chip called TrueNorth [11], and in 2017 Intel unveiled its neuromorphic research chip, called “Loihi” [12]. A chip described as the first self-learning neuromorphic chip, from the Belgium-based imec, was reported in October 2019 [13]. It can compose music, particularly in the style of old Belgian and French flute music, since that was the music from which the chip learned the rules of composition [13].

Future of neuromorphic engineering

Currently, researchers are still leveraging VLSI technology and using the nonlinear current properties of transistors to emulate the nonlinearities of brain computation. There is a major effort to understand how neuromorphic models, that is, in silico models of what the brain is doing, can be represented on a chip. With new nanotechnologies and better VLSI processes, our ability to tackle computers’ problems of high power usage and device unreliability should keep improving. Neuromorphic modeling shows us that we are not close to the computational potential of silicon [6], but the problem remains that we cannot build biologically inspired systems when we do not understand the biology behind information representation. The future of neuromorphic engineering still rests on discoveries in neuroscience. Once we understand the computational principles used by the cortex and how those algorithms process information, we can learn to implement them in hardware. This will allow researchers to develop radically new paradigms and construct a new generation of technology that combines the capabilities of the brain with the strengths of silicon technology.

References

[1] N. Katta et al., “Analysis of biological and artificial chemical sensor responses to odor mixtures,” SENSORS, 2013 IEEE, Baltimore, MD, USA, 2013, pp. 1-4, doi: 10.1109/ICSENS.2013.6688196.

[2] R. Jacob Vogelstein, Udayan Mallik, Eugenio Culurciello, Gert Cauwenberghs, Ralph Etienne-Cummings; A Multichip Neuromorphic System for Spike-Based Visual Information Processing. Neural Comput 2007; 19 (9): 2281–2300. doi: https://doi.org/10.1162/neco.2007.19.9.2281

[3] R. Sarpeshkar: Brain power – borrowing from biology makes for low power computing – bionic ear, IEEE Spectrum 43(5), 24–29 (2006)

[4] M. Mahowald, R.J. Douglas: A silicon neuron, Nature. 354, 515–518 (1991)

[5] M. Mahowald: The silicon retina, Sci. Am. 264, 76–82 (1991)

[6] Diorio, C. Why Neuromorphic Engineering? https://homes.cs.washington.edu/~diorio/Talks/InvitedTalks/Telluride99/sld001.htm. Accessed 15 Apr. 2021.

[7] Indiveri, Giacomo. “Neuromorphic Engineering.” Springer Handbook of Computational Intelligence, edited by Janusz Kacprzyk and Witold Pedrycz, Springer Berlin Heidelberg, 2015, pp. 715–25, doi:10.1007/978-3-662-43505-2_38.

[8] “Silicon Chips as Artificial Neurons.” Medgadget, 5 Dec. 2019, https://www.medgadget.com/2019/12/silicon-chips-as-artificial-neurons.html.

[9] Farquhar, Ethan; Hasler, Paul. (May 2006). A field programmable neural array. IEEE International Symposium on Circuits and Systems. pp. 4114–4117. doi:10.1109/ISCAS.2006.1693534. ISBN 978-0-7803-9389-9. S2CID 206966013.

[10] Sharad, Mrigank; Augustine, Charles; Panagopoulos, Georgios; Roy, Kaushik (2012). “Proposal For Neuromorphic Hardware Using Spin Devices”. arXiv:1206.3227 [cond-mat.dis-nn]

[11] Modha, Dharmendra (August 2014). “A million spiking-neuron integrated circuit with a scalable communication network and interface”. Science. 345 (6197): 668–673. Bibcode:2014Sci…345..668M. doi:10.1126/science.1254642. PMID 25104385. S2CID 12706847.

[12] Davies, Mike; et al. (January 16, 2018). “Loihi: A Neuromorphic Manycore Processor with On-Chip Learning”. IEEE Micro. 38 (1): 82–99. doi:10.1109/MM.2018.112130359. S2CID 3608458.

[13] Bourzac, Katherine. “A Neuromorphic Chip That Makes Music”. IEEE Spectrum. October 1, 2019.
