October 27

The Language and Psychology of Modern “Cults”

We use the term “cult” loosely in today’s colloquial language. Ask around, and you might hear the opinion that people who religiously attend expensive spin classes or CrossFit are in a cult. MLMs (multi-level marketing schemes) are a cult. Academia is a cult! As a member of the cult of academia, I promise I am here willingly. But is being manipulated in some way a requisite for cult membership? Must you be removed from general society to be considered a victim of cult-hood, or are there cult members calmly navigating life next to us each day? Moreover, what causes someone to join and remain in a cult? With such a wide range of “cultish” activities in today’s world, some of which are certainly innocuous, it becomes important to assess the hallmark techniques and qualities of bona fide cults and to try to mark the point at which groupthink becomes toxic. In 2021, author Amanda Montell set out to do just that in her book “Cultish: The Language of Fanaticism.” She aimed to investigate the community-building tactics, rooted in linguistics and psychology, that cults and cult-like groups use.

Exclusive Language

First and foremost, unique language emerged as a common thread among cults and cult-like groups. Exclusive groups or organizations often have a specialized vocabulary or set of acronyms with which they refer to concepts that hold significance to them. This is familiar in academic science, where subdisciplines of research commonly use language that is foreign to most people. Whether a term is an acronym for a chemical reagent, a niche technique, or a key structure located deep within the brain, it is easy to admit that the words we utter each day would not make sense to those who do not work in our field. The same applies to any organization or club one might join. Montell observed that this creates a sense of community: in each small microcosm of society, distinct jargon fosters a sense of inclusion, uniqueness, and knowledge. In tandem, it acts to exclude those on the outside. In an interview with a former member of 3HO (a Kundalini Yoga group) featured in Montell’s book, we learn that members were taught to speak with a distinct vocabulary and style, reciting mantras meant to help them live at a higher vibration. They feared misbehaving, lest they return in the next life at a lower vibration. Members of this group were also stripped of their individual names and assigned new ones by their leader. Interestingly, they all shared the same last name, “…like one big family” [1]. It must be acknowledged that, in this scenario, the very language used to create a tight-knit community was being weaponized against its members to keep them in line. This type of targeted linguistic attack would mean little to an outsider but could be devastating to those at whom it was aimed. Because of the exclusivity of cult-like groups (think “us versus them”), members often feel cut off from their former circles. This becomes dangerous when they find themselves in a frightening situation and have nowhere to turn, as non-members may not understand the weight behind their words.

For those who have seen the popular series The Vow, the cult NXIVM may come to mind. This now-infamous cult, a scheme fraught with extortion and sexual abuse, started under the guise of a leadership summit [2]. Its flagship product was a group of courses known as the Executive Success Program (dubbed ESP). Attendees paid exorbitant amounts of money to be bombarded with rhetoric and niche terms that were supposed to help them better contextualize and conquer their professional lives and become more elevated versions of themselves. If they committed to taking all the courses, they could advance on the ‘stripe path,’ earning a wearable sash that advertised their standing in the group. The workshops taught attendees how to prevent disintegrations: negative thoughts and feelings that blocked their path to enlightenment [3]. Unsurprisingly, resistance to feedback from superiors in NXIVM, known as proctors, was considered a sign of disintegration.

Under the guidance of founder Keith Raniere, whom members referred to as Vanguard, ESP served as a front to recruit and engage members and to raise capital for more devious purposes. Those who initially joined NXIVM to gain confidence and build a network might soon find themselves recruited into other groups under its umbrella, some of which were segmented by gender. The slippery slope is best exemplified by the experience of one former member who found a community in NXIVM, including her husband and best friends, before being invited into a secret group where she was required to act as a ‘slave’ to her close friend, now her ‘master’. Her promise to her community was sacred to her, and though she was uncomfortable, she obeyed her ‘master’. To further coerce members into silence, damaging collateral information was collected on each ‘slave’ [2]. Ultimately, each member was even branded with a symbol of her membership (alongside many other terrified women, forging a frightening bond through the trauma). The pressure this member felt to conform to the role her cult’s language dictated for her is something non-members cannot be expected to fathom. For far too long, she was afraid to speak up. Her entire world existed within NXIVM, due in part to the manipulative techniques its leadership used.

The Need to Belong

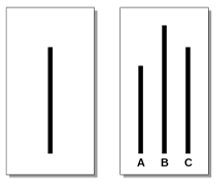

Language, as powerful as it is, only scratches the surface of how cults can reshape people’s way of thinking. Humans are innately social and crave acceptance and community. The community-building effect of jargon-laden language is amplified by several other measures cults take: dress codes and/or uniforms, communal living, and dating within the group [1,2]. These practices forge bonds of camaraderie within groups by creating a peaceful state of sameness. To contextualize this, it helps to reflect on classic psychological experiments on interpersonal influence. In 1951, Solomon Asch conducted a study designed to assess how the responses of strangers influence an individual’s judgment in a simple task [4]. Participants were shown a reference line (left) and several labeled comparison lines of differing length (right), as in Fig. 1, and asked to identify which comparison line matched the reference line in length. While the obvious answer is B, participants did not always give it. Remarkably, over the course of 12 critical trials, 75% of participants chose one of the incorrect lines at least once. How could this be? Other ‘participants,’ who were in fact confederates planted by the experimenter, had all uniformly given the same wrong answer before the real participant’s turn to reply [4]. Thus, participants went against their better judgment, ignoring what was printed before them, to provide an answer that matched those of the people around them. Even without a charismatic leader’s direct influence, humans show a tendency to fall into line with others.

“Brainwashing?”

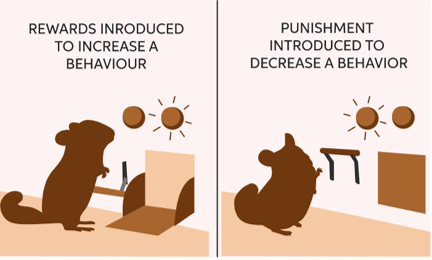

When you think of cults, the concept of brainwashing probably comes to mind. The American Psychological Association defines “brainwashing” as “a broad class of intense and often coercive tactics intended to produce profound changes in attitudes, beliefs, and emotions. Targets of such tactics have typically been prisoners of war and members of religious cults” [5]. Although the term may sound like science fiction, and experts tend to discourage its use, the coercive tactics it describes are neither uncommon nor fantastical. Cult recruits are often ‘love-bombed,’ or given positive reinforcement for their steps toward becoming part of the group [6]. Consistent positive reinforcement, in tandem with nuanced language and community support, plays on the same innate need to belong that leads people to conform to a group of peers giving an obviously incorrect answer [4]. Over a longer timescale, this can be thought of as a form of conditioning. In psychology and neuroscience, conditioning broadly refers to learning an association. Classical conditioning may come to mind first, but it is not the best description of what happens in cultish environments. Pavlov’s dogs learned to anticipate food at the sound of a bell, but neither the bell nor the food was under their control; they merely salivated in anticipation of dinner [7]. In ‘love-bombing,’ by contrast, recruits are rewarded for their own actions when those actions accord with the group’s values. This falls under the purview of operant conditioning, in which specific actions are followed by consequences: reinforcement, which makes an action more likely to recur, or punishment, which makes it less likely (Fig. 2, [8]). When a recruit is taken under the wing of a cultish group, they receive positive reinforcement for actions that jibe with the group’s mission, cementing a feeling of belonging and camaraderie while playing to the ego. Conversely, actions that oppose or challenge the group’s beliefs or rules are met with punishment. This often comes at a later stage, after the leadership has built trust with members. It may lead to a fear of being targeted, punished, or expelled from a group that members have learned to rely on. Unsurprisingly, this fear can be enough to quash unwanted behaviors and keep followers in line.

Am I in a Cult?

Although it is hard for the average person to imagine being tempted to join a cult, cultish techniques do seem to permeate our everyday lives. At the gym, at work, or among friends, humans have historically adjusted their behavior to impress and coexist with those around them. We all strive for approval and camaraderie in our chosen circles, and most of the time this does not lead to abuse or cultish dalliances. Still, vulnerability is a strong force that may lead even the most logical individuals to yield to manipulative groups. The power of language, community building, and psychological conditioning cannot be discounted; perhaps it is no wonder that so many of today’s most popular gyms, brands, and other activities are said to have “cult-like” followings.

Citations

[1] Montell, A. (2021). Cultish: The language of fanaticism. Harper Wave.

[2] Buhler, V., & Varela, R. (Executive Producers). (2020, August). The Vow [TV series]. HBO.

[3] Berman, S. (2022). Don’t call it a cult: The shocking story of Keith Raniere and the women of NXIVM. Penguin Canada.

[4] Asch, S. E. (1951). Effects of group pressure upon the modification and distortion of judgment. In H. Guetzkow (Ed.), Groups, leadership and men. Pittsburgh, PA: Carnegie Press.

[5] American Psychological Association. (n.d.). APA Dictionary of Psychology. American Psychological Association. Retrieved October 18, 2022, from https://dictionary.apa.org/brainwashing

[6] Halperin, D. A. (1982). Group processes in cult affiliation and recruitment. Group, 6(2), 13–24. https://doi.org/10.1007/bf01456447

[7] Yamamoto, T. (2009). Classical Conditioning (Pavlovian Conditioning). In: Binder, M.D., Hirokawa, N., Windhorst, U. (eds) Encyclopedia of Neuroscience. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-29678-2_1067

[8] Skinner, B. F. (1937). Two types of conditioned reflex: A reply to Konorski and Miller. The Journal of General Psychology, 16(1), 272–279.

Cover image © Verena Daniel
