Noise vs song: how are naturalistic stimuli processed in the brain?

One problem encountered in researching sensory systems is that the classical stimuli used to probe a sensory system are often not representative of what that system might encounter in the real world. Furthermore, it has been difficult to explain the response of neurons to naturalistic stimuli (such as natural scenes, faces, or speech) based solely on the data collected from their responses to experimental stimuli (such as drifting gratings or various noise stimuli). Essentially, when attempting to characterize the response properties of neurons, investigators frequently find that those properties are stimulus-dependent in ways that go beyond simple presence/absence or linear strengthening/weakening of the response.

Figure 2a. STRF excitatory bandwidth is stimulus-dependent. a, Song STRFs (left) and noise STRFs (right) for three representative MLd neurons. Song and noise STRFs of the neuron in the top row are highly similar. The neurons in the middle and bottom rows have song and noise STRFs that differ in their spectral and temporal tuning.

Woolley et al tackle this problem of stimulus-dependence in the auditory system of the zebra finch. Certain zebra finch auditory midbrain neurons respond differently to frequency-intensity combinations of sound, as measured by their spectrotemporal receptive fields (STRFs), depending on whether the sound is a pure tone or noise stimulus or a song (Fig 2a, from Woolley et al 2011). In the figure, two of the three example neurons have STRFs that differ in spectral and temporal tuning depending on whether the stimulus presented was noise or song, while the third has highly similar song and noise STRFs. Inspired by these differences, Woolley et al set out to determine the origin of the stimulus dependence.

The stimulus-dependence of the STRFs is statistically significant, and two hypotheses have been developed to explain how the different STRFs might arise. One hypothesis is that individual neurons modulate their response properties based on the varying statistical properties of the stimulus. The other hypothesis is that the response properties of individual neurons are static but also nonlinear.

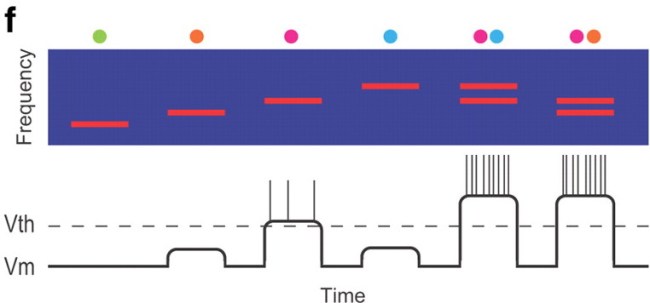

Figure 3f. Dots at the top of the spectrogram show the location of each stimulus in the neuron’s receptive field. A diagram of changes in membrane potential and spiking activity (vertical lines) during each stimulus is shown below.

To test these hypotheses, Woolley et al recorded from zebra finches and characterized the classical and extra-classical receptive fields of neurons (CRFs and eCRFs respectively). Woolley et al “define the CRF as the range of frequency–intensity combinations that modulate spiking significantly above or below the baseline firing rate,” and “the eCRF as consisting of frequency–intensity combinations that do not modulate the firing rate when presented alone, but do modulate the firing rate during simultaneous CRF stimulation” (Fig 3f, from Woolley et al 2011). Those more familiar with the visual system might see this as a kind of auditory equivalent of ‘center-surround’ receptive fields. Woolley et al observe that a major difference between noise and song is that in noise energy is confined to a narrow frequency band, while in song higher energy tends to occur in neighboring bands (Fig 3a, from Woolley et al 2011). This suggested that eCRFs might be responsible for the different response properties between noise and song.
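The two quoted definitions can be read as a simple decision rule. The sketch below is only an illustration of that rule, not the authors' analysis: the function name `classify_probe`, the firing rates, and the tolerance `tol` (a crude stand-in for a proper significance test) are all assumptions made for this example.

```python
def classify_probe(baseline, probe_alone, crf_alone, probe_with_crf, tol=1.0):
    """Label one frequency-intensity combination (the "probe").

    All arguments are firing rates in spikes/s (hypothetical values):
      baseline       - spontaneous rate with no stimulus
      probe_alone    - rate when the probe tone is presented by itself
      crf_alone      - rate when a tone inside the CRF is presented by itself
      probe_with_crf - rate when probe and CRF tone are presented together
    tol is an assumed threshold standing in for a significance test.
    """
    # CRF: the probe by itself moves spiking above or below baseline.
    if abs(probe_alone - baseline) > tol:
        return "CRF"
    # eCRF: silent alone, but changes the response to simultaneous CRF stimulation.
    if probe_with_crf - crf_alone > tol:
        return "excitatory eCRF"
    if crf_alone - probe_with_crf > tol:
        return "inhibitory eCRF"
    return "outside receptive field"


# A probe that does nothing alone but boosts the paired response.
print(classify_probe(baseline=5.0, probe_alone=5.3, crf_alone=20.0, probe_with_crf=30.0))
```

The point of the paired presentation is visible in the second and third branches: an eCRF frequency cannot be detected with single tones, only by comparing the paired response against the CRF tone alone.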

Figure 3a. Correlated stimuli could modulate neural responses by recruiting subthreshold inputs. Song and noise stimuli have different correlations. Spectrograms of 2 s samples of song (top) and noise (bottom) are on the left and 60 ms samples of song and noise are on the right.

Indeed, when probing neurons with paired tones, Woolley et al found that eCRF properties were strongly correlated with the properties of the stimulus-dependent STRFs. Furthermore, certain neurons displayed a broader STRF in response to song than to noise, and these neurons reliably displayed excitatory eCRFs, whereas inhibitory eCRFs seemed to have no impact on STRFs. Using these findings, Woolley et al developed a model with neurons that have subthreshold tuning, a spiking threshold, and excitatory eCRFs, inhibitory eCRFs, or only CRFs. Their model recapitulates the observations that eCRFs are needed for stimulus-dependent STRFs and that excitatory eCRFs correlate with broader song STRFs. This strongly suggests that the observed stimulus-dependent STRFs can be well accounted for by extra-classical receptive fields.
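To make the "static but nonlinear" idea concrete, here is a toy threshold neuron. It is a minimal sketch under stated assumptions, not the model from the paper: the Gaussian weight profile, the nine frequency channels, the threshold of 0.9, and all function names are hypothetical choices made for illustration. It shows how subthreshold tuning plus a fixed spiking threshold can produce an excitatory eCRF without the neuron changing any of its parameters.

```python
import math

N_CHANNELS = 9  # hypothetical number of frequency channels


def subthreshold_weights(best_channel, width):
    """Gaussian input weights across frequency channels (assumed shape)."""
    return [math.exp(-0.5 * ((c - best_channel) / width) ** 2)
            for c in range(N_CHANNELS)]


def spike_rate(stimulus, weights, threshold):
    """Fixed nonlinearity: summed input drive, rectified at the spiking threshold."""
    drive = sum(w * s for w, s in zip(weights, stimulus))
    return max(0.0, drive - threshold)


def tone(channel):
    """Unit energy in a single frequency channel."""
    return [1.0 if c == channel else 0.0 for c in range(N_CHANNELS)]


weights = subthreshold_weights(best_channel=4, width=1.5)
threshold = 0.9  # assumed value; only the best channel crosses it alone

center_alone = spike_rate(tone(4), weights, threshold)  # inside the CRF
flank_alone = spike_rate(tone(6), weights, threshold)   # subthreshold input only
paired = spike_rate([a + b for a, b in zip(tone(4), tone(6))], weights, threshold)

print("center alone:", center_alone)
print("flank alone: ", flank_alone)
print("paired:      ", paired)
```

In this toy neuron the flanking tone evokes no spikes by itself, yet it raises the response when paired with the center tone. A stimulus with energy in neighboring bands, as in song, would therefore recruit inputs that an isolated narrow-band stimulus leaves below threshold, which is one way a fixed neuron could show a broader STRF for song than for noise.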

In summary, the stimulus-dependence of STRFs observed in zebra finch auditory midbrain neurons for noise vs song can be accounted for in large part by differential activation of extra-classical receptive fields, because in noise the energy is confined to a narrow frequency band while in song higher energy appears in neighboring bands.

These findings, coupled with similar findings in other species, suggest that stimulus-dependence can be accounted for by extra-classical receptive fields. In addition, while it might be possible for neurons to vary their response properties based on the statistics of the stimulus (input), such an explanation does not need to be invoked to explain stimulus-dependence over short timescales. Furthermore, these findings provide more evidence that stimuli optimized to maximally excite classical receptive fields fail to capture the full range of neuronal response properties.

Please join us for the fourth installment of the 2012-2013 Neuroscience Seminar Series at 4 pm on Tuesday, October 23rd in the CNBC Large Conference Room, as Sarah Woolley shares her work on the neural coding of vocalizations in auditory scenes.

Tom Gillespie is a first year in the Neurosciences PhD program. For his first rotation, he is studying the visual system with Ed Callaway at the Salk Institute.

Schneider D.M. & Woolley S.M.N. (2011). Extra-Classical Tuning Predicts Stimulus-Dependent Receptive Fields in Auditory Neurons, Journal of Neuroscience, 31 (33) 11867-11878. DOI: 10.1523/JNEUROSCI.5790-10.2011