September

12

Your Mind on Trial

The British television series Black Mirror has, at times, been disturbingly prophetic. In season 2’s The Waldo Moment, a crude comedian runs for Parliament to disparage the system, only to find himself a front-runner. In April 2019, Volodymyr Zelensky, a comedian who had previously played the Ukrainian president on a popular TV show, was elected President of Ukraine with over 70% of the vote. In the episode Be Right Back, a woman brings back an artificial version of her boyfriend, who was killed in a car accident. Drawing on his social media accounts, the service eerily reproduces his personality and, eventually, his physical likeness, comforting his grieving girlfriend. Leaving the physical body behind in favor of a chatbot, the app Replika was founded to do much the same, allowing users to essentially talk to themselves (and, as if to fulfil the prophecy outright, its first iteration was built to mimic the founder’s close friend, who had also been killed in a car accident).

Left: Waldo’s campaign for office. Right: TV promo for Servant of the People, in which the current president of Ukraine played the president of Ukraine.

In a plot reminiscent of the ’90s thriller I Know What You Did Last Summer, the Black Mirror episode Crocodile follows its protagonist as she tries to hide from a dark past that comes back to bite her. A detective on the case uses a recaller, a small device placed on a witness’s head that lets her view people’s memories on a small screen as they recall them. The implications of such a device are profound: our current concerns about surveillance would seem trivial next to a device that could make our internal thoughts no longer ours alone. Military and law-enforcement interest in this technology would, understandably, be limitless. Such a device may not be feasible any time soon, but given the series’ track record of foreshadowing real-world events, it’s not unreasonable to imagine one coming into being.

The recaller being placed on a witness in Crocodile

Challenge 1: Technology

The device shown in the episode appears to be a single recording device placed near the subject’s temple. Suffice it to say, a recaller accessing only a single channel of brain activity would capture little more than a coarse aggregate signal, and it certainly could not recreate what the subject is remembering. Technology for recording far richer brain activity does, however, exist in various forms, and if we assume continuous technological progress, we can imagine a qualitatively similar device being developed. While the recaller as depicted wouldn’t pan out, if we were detectives in a (dystopian) future, we could certainly make some improvements.

For starters, we need to record data from many neurons and be able to distinguish among them. Within limits, the more neurons we can (independently) record from, the more information we can glean. Not too long ago it was a big deal for neuroscientists to record from more than a few independent neurons at once. One commentary suggests that the number of simultaneously recorded neurons doubles roughly every 7.4 years, a phenomenon dubbed Stevenson’s Law (a nod to Moore’s law, which predicts that the number of transistors on a chip doubles roughly every 2 years; Stevenson & Kording, 2011). Today, modern electrodes can record from hundreds to thousands of neurons at once. We will, of course, have to cross the bridge of using these devices on humans, which may be insurmountable without the decimation of modern ethics. Let’s imagine we achieve this: a device that can seamlessly record from 10,000 neurons anywhere on the surface of the brain, which we will dub the recaller2. We will also assume this hypothetical device will (somehow) be minimally invasive (e.g., no worse than giving blood in terms of discomfort, risk, and recovery time) so that it can be used with impunity.

Stevenson’s Law: the number of simultaneously recorded neurons doubles approximately every 7.4 years. Right: examples of modern recording technologies, including the microelectrode (Utah) array and calcium imaging (Stevenson & Kording, 2011).

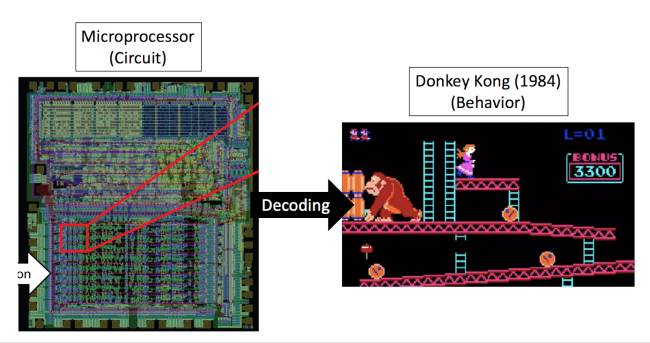
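Because Stevenson’s Law is simple exponential growth, N(t) = N₀ · 2^(t / 7.4), it can be inverted to ask how long the field would need to reach the recaller2’s hypothetical 10,000 neurons. The Python sketch below does that back-of-the-envelope arithmetic. The 1,000-neuron starting point is an illustrative assumption (the text only says “hundreds to thousands”), and the function names are hypothetical.

```python
import math

# Doubling time reported by Stevenson & Kording (2011): ~7.4 years.
DOUBLING_TIME_YEARS = 7.4


def projected_neurons(n_now: float, years_ahead: float,
                      doubling_time: float = DOUBLING_TIME_YEARS) -> float:
    """Neurons recordable after `years_ahead`, assuming the trend holds:
    N(t) = N0 * 2 ** (t / T_double)."""
    return n_now * 2 ** (years_ahead / doubling_time)


def years_to_reach(n_now: float, n_target: float,
                   doubling_time: float = DOUBLING_TIME_YEARS) -> float:
    """Invert the growth law: t = T_double * log2(N_target / N0)."""
    if n_now <= 0 or n_target <= 0:
        raise ValueError("neuron counts must be positive")
    return doubling_time * math.log2(n_target / n_now)


if __name__ == "__main__":
    start = 1_000    # hypothetical present-day channel count
    target = 10_000  # the recaller2's hypothetical channel count
    print(f"{years_to_reach(start, target):.1f} years to reach {target} neurons")
    print(f"{projected_neurons(start, DOUBLING_TIME_YEARS):.0f} neurons after one doubling time")
```

Under these assumptions the projection is about 24.6 years (7.4 × log₂ 10). It is only an extrapolation of an empirical trend, not a forecast.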

Challenge 2: Technique

Having the recaller2 alone would not be enough to see into someone’s thoughts. It is a fallacy to think that most neuroscience objectives are primarily data-limited, i.e., that once the data come in, understanding will follow. A well-known study titled Could a Neuroscientist Understand a Microprocessor? found that despite knowing every connection in a microprocessor (read: its connectome) and the state of every transistor (read: neuron), conventional neuroscience techniques (e.g., receptive field estimation) could not determine how any transistor maps onto the behavioral output, the visual display of the Donkey Kong video game (Jonas & Kording, 2017). This is partly because the researchers were trying to map from an arbitrarily specific unit (the circuit) onto a behavior (software) that is functionally independent of the specifics of the circuit. In other words, no direct mapping between the circuit and the display exists.

Recording from the circuit for Donkey Kong (left) cannot be used to predict the behavior (right) of the game.

A similar pattern would emerge if we tried to view someone’s memories as they recall them. It is widely held that long-term memories are (at least initially) stored in the connection patterns of the seahorse-shaped brain region known as the hippocampus. Unfortunately for our hypothetical recaller2, the meaning of each cell’s firing would, like the microprocessor’s transistors, appear arbitrary and unconnected to the memory being recalled. No direct mapping would exist. This is because the information represented by each cell is tied to its connections with the rest of the brain, which in the hippocampus are random by design.

Fortunately, there may be a brain area whose activity maps directly onto our memories. An efficiently built system should avoid redundancy by using the same hardware to represent things both perceived by our sensory organs (eyes, ears) and imagined. Indeed, converging evidence suggests that visual imagery works in much the same way as sight and that we can decode what is being imagined from brain activity.

Experimentally, decoding memories from visual areas has been shown convincingly. Early experimenters first had participants view oriented bars on a computer screen while patterns of brain activity were measured from their visual cortex (see below). A model was then constructed to predict the orientation of the bars someone was seeing using only their brain data. When participants were shown bars they had to hold in memory over a long delay period, the researchers were still able to determine which orientation they were thinking of while nothing was on the screen. This offered some of the first evidence that a memory could be extracted from activity in visual areas of the brain (Harrison & Tong, 2009). Moving beyond simple features to real-world objects, more recent work has demonstrated that entire object categories of imagined stimuli (e.g., a leopard) can be decoded from brain activity (Horikawa & Kamitani, 2017). While these demonstrations are limited to participants imagining simple features like orientations or single objects, they are a strong proof of principle that imagined or remembered features can be decoded in the same manner as when they were originally perceived.

Left: orientation presented to the participant. Right: reconstruction of the orientation the participant is holding in mind during the memory delay (Personal).
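The train-on-perception, test-on-memory logic can be illustrated with simulated data. The Python sketch below is a toy, not a re-analysis of Harrison & Tong or Horikawa & Kamitani: every parameter (number of units, tuning width, noise level, and the assumption that memory-delay activity is a weaker copy of the perceptual pattern, `MEMORY_GAIN`) is hypothetical, and it uses a simple correlation (template-matching) classifier, which is not necessarily the classifier those studies used.

```python
import numpy as np

rng = np.random.default_rng(0)

# All parameters are hypothetical, chosen for illustration only.
N_UNITS = 200                           # recorded units (voxels or neurons)
ORIENTATIONS = np.array([25.0, 115.0])  # the two orientations to tell apart (degrees)
N_TRIALS = 50                           # trials per orientation per condition
NOISE_SD = 1.0                          # independent Gaussian noise per unit
KAPPA = 1.0                             # tuning sharpness
MEMORY_GAIN = 0.3                       # assumption: memory activity is a weak copy of perception

# Each unit has a random preferred orientation. Orientation repeats every
# 180 degrees, so tuning is computed on the doubled angle.
preferred = rng.uniform(0.0, 180.0, N_UNITS)


def mean_response(theta_deg, gain):
    """Noise-free population response to orientation theta_deg, shape (N_UNITS,)."""
    delta = np.deg2rad(2.0 * (theta_deg - preferred))
    return gain * np.exp(KAPPA * (np.cos(delta) - 1.0))


def simulate(theta_deg, n_trials, gain):
    """Single-trial patterns: mean response plus noise, shape (n_trials, N_UNITS)."""
    return mean_response(theta_deg, gain) + rng.normal(0.0, NOISE_SD, (n_trials, N_UNITS))


def zscore_rows(x):
    """Standardize each row across units so that (dot product / N) is Pearson r."""
    return (x - x.mean(axis=-1, keepdims=True)) / x.std(axis=-1, keepdims=True)


def classify(patterns, templates):
    """Label each trial with the template it correlates with most strongly."""
    corr = zscore_rows(patterns) @ zscore_rows(templates).T / patterns.shape[-1]
    return corr.argmax(axis=1)


# 1. "Perception" phase: average the seen-orientation patterns into templates.
templates = np.stack([simulate(t, N_TRIALS, gain=1.0).mean(axis=0) for t in ORIENTATIONS])

# 2. "Memory" phase: nothing on screen, only a weak internally generated signal.
memory_X = np.concatenate([simulate(t, N_TRIALS, gain=MEMORY_GAIN) for t in ORIENTATIONS])
memory_y = np.repeat(np.arange(len(ORIENTATIONS)), N_TRIALS)

accuracy = float((classify(memory_X, templates) == memory_y).mean())
print(f"memory-trial decoding accuracy: {accuracy:.2f} (chance = 0.50)")
```

Because Pearson correlation ignores the overall scale of a pattern, templates built from strong perceptual responses can still match the much weaker memory-delay patterns, which is the intuition behind decoding a remembered orientation with a model trained on seen ones.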

Importantly, relative to the recaller2, these visual reconstructions were achieved using a slow and indirect measure of brain activity (functional MRI). The point is that when modern neuroscience techniques typically reserved for animal models are used in humans, we can expect much higher-resolution reconstructions. For example, while mice can only behaviorally discriminate between gratings that differ in orientation by at least 20 degrees, gratings separated by as little as 0.3 degrees could be decoded from the activity of ~20,000 neurons recorded in mouse visual cortex (Stringer et al., 2019, preprint). This suggests that we will be able to achieve far more precise reconstructions in humans than currently demonstrated once the recaller2 becomes available.
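Why would more neurons buy finer precision? A textbook population-coding calculation gives the intuition. For N neurons with independent Poisson noise, the Fisher information about orientation is I(θ) = Σᵢ fᵢ′(θ)² / fᵢ(θ), and the smallest difference an ideal observer can detect at d′ = 1 is Δθ ≈ 1/√I(θ). When preferred orientations tile the circle evenly, I grows in proportion to N, so the threshold shrinks like 1/√N. The Python sketch below assumes von Mises-like tuning curves with made-up parameters and independent noise. It is not the analysis Stringer et al. performed, and real neurons share correlated noise, which can limit how much each added neuron helps.

```python
import numpy as np

# Hypothetical tuning parameters (spike counts per trial window), not fitted to data.
BASELINE = 1.0    # spikes at the anti-preferred orientation
AMPLITUDE = 10.0  # additional spikes at the preferred orientation
KAPPA = 2.0       # tuning sharpness


def fisher_information(theta_deg: float, n_neurons: int) -> float:
    """Fisher information (per radian^2) about orientation for n_neurons with
    evenly spaced preferred orientations and independent Poisson noise:
    I(theta) = sum_i f_i'(theta)**2 / f_i(theta)."""
    theta = np.deg2rad(theta_deg)
    preferred = np.linspace(0.0, np.pi, n_neurons, endpoint=False)  # orientation is pi-periodic
    u = 2.0 * (theta - preferred)
    bump = AMPLITUDE * np.exp(KAPPA * (np.cos(u) - 1.0))
    rate = BASELINE + bump
    d_rate = bump * KAPPA * (-np.sin(u)) * 2.0  # chain rule: du/dtheta = 2
    return float(np.sum(d_rate ** 2 / rate))


def threshold_deg(n_neurons: int, theta_deg: float = 45.0) -> float:
    """Orientation difference an ideal observer detects at d' = 1:
    delta_theta = 1 / sqrt(I(theta)), converted from radians to degrees."""
    return float(np.rad2deg(1.0 / np.sqrt(fisher_information(theta_deg, n_neurons))))


if __name__ == "__main__":
    for n in (100, 1_000, 10_000):
        print(f"{n:>6} neurons: threshold ~ {threshold_deg(n):.3f} deg")
```

Under these assumptions, increasing N a hundredfold (from 100 to 10,000 neurons) lowers the threshold tenfold; the absolute values depend entirely on the made-up tuning parameters.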

Challenge 3: Imagination

The practical use of the recaller2 is hard to imagine because we don’t really have any parallels in today’s world. Presumably, whoever is being interrogated doesn’t want you to see anything incriminating. In Crocodile, the detective tries to cue the memories of witnesses by triggering associations, in one case opening a beer to evoke memories of a city street. Memories are inherently associative, and their recall is often involuntary. The so-called ironic process theory suggests that attempting not to think about something, e.g., a purple elephant, will only cause you to think of it more. (For those who remember the early internet meme “The Game”… you just lost.) So, presuming you can’t help thinking about a crime you committed when appropriately cued, would we really be able to see it in your brain activity?

Some interesting recent work suggests that our mental imagery may be more cartoonish, or gist-like, than our original perception (Breedlove et al., 2018, preprint). Our memories of events are likely formed from our high-level experience of those events, so details we were not specifically paying attention to are unlikely to be available. Incidentally, in Crocodile the detective looks for details in the background of memories, details which would likely never have found their way into memory in the first place (à la “The limit does not exist” from Mean Girls). How we would go from a gist-like reconstruction of someone’s memory to evidence of a crime is an open question, especially as courts are accustomed to evidence with clear-cut implications, such as DNA. A 1923 case, Frye v. United States, ruled that scientific evidence must be “sufficiently established to have gained general acceptance in the particular field in which it belongs”. It follows that the recaller2 would likely have to gain substantial credibility in academic circles before finding its way into the justice system.

Looking Forward

While this was a hypothetical exercise, it may not remain one for long. Private money has begun to move into this space, most notably Elon Musk’s venture Neuralink, which has the long-term goal of allowing humans to interface directly with AI using a device similar in nature to the recaller2. The company has already made substantial progress on the technology side, creating a device designed to record from many neurons while remaining minimally invasive. It may only be a matter of time before these hypotheticals become tangible.

Works Cited:

[1] Jonas, Eric, and Konrad Paul Kording. “Could a neuroscientist understand a microprocessor?” PLoS Computational Biology 13.1 (2017): e1005268.

[2] Stevenson, Ian H., and Konrad P. Kording. “How advances in neural recording affect data analysis.” Nature Neuroscience 14.2 (2011): 139.

[3] Harrison, Stephenie A., and Frank Tong. “Decoding reveals the contents of visual working memory in early visual areas.” Nature 458.7238 (2009): 632.

[4] Horikawa, Tomoyasu, and Yukiyasu Kamitani. “Generic decoding of seen and imagined objects using hierarchical visual features.” Nature Communications 8 (2017): 15037.

[5] Anumanchipalli, Gopala K., Josh Chartier, and Edward F. Chang. “Speech synthesis from neural decoding of spoken sentences.” Nature 568.7753 (2019): 493.

[6] Stringer, Carsen, Michalis Michaelos, and Marius Pachitariu. “High precision coding in mouse visual cortex.” bioRxiv (2019): 679324.

[7] Breedlove, Jesse L., et al. “Human brain activity during mental imagery exhibits signatures of inference in a hierarchical generative model.” bioRxiv (2018): 462226.
